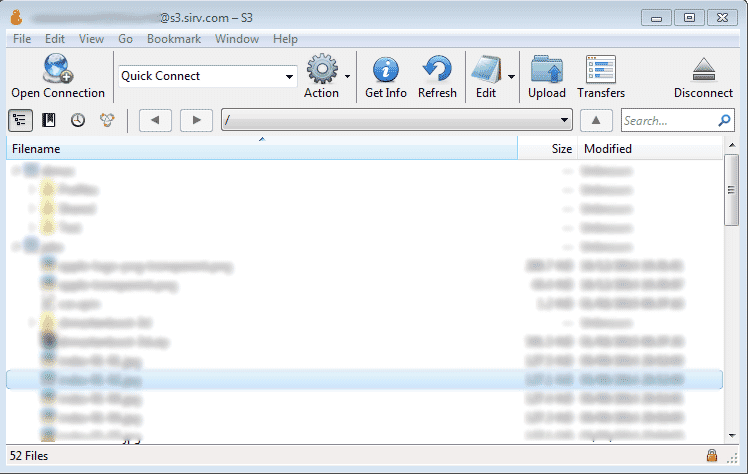

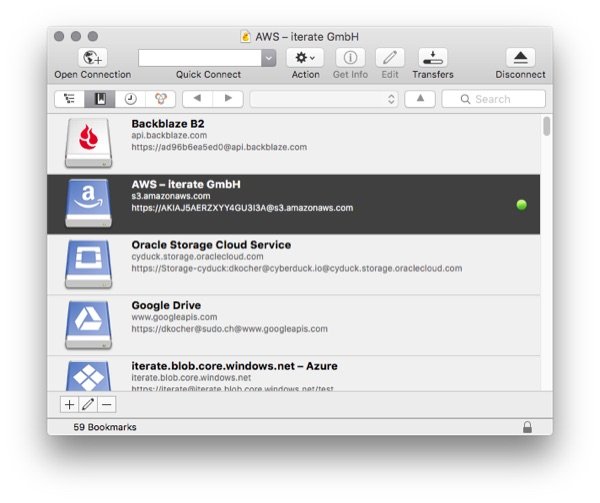

This would be in line with how the AWS SDK behaves.

I've used a few different methods to copy Amazon S3 data to a local machine. I've tried s3cmd and Cyberduck, but for me awscli was by far the fastest way to download 70,000 files from my bucket. To raise the number of parallel requests for a profile, run `aws configure set s3.max_concurrent_requests 1000 --profile profile-name`.

I'm also trying to use the Cyberduck CLI for uploading and downloading files from an Amazon S3 bucket. This is what I've tried so far for listing the bucket contents, but I'm unable to formulate the correct S3 URL: `s3://accesskey:secret/s3./bucketname/`.

Before interacting with S3 you need to set up your credentials. Amazon S3 uses Access Control List (ACL) settings to control who may access or modify items stored in S3.

Minio Server is running on localhost on port 9000 over HTTP; follow the Minio quickstart guide to install Minio. Since Minio is Amazon S3 API compatible, you will need to download the Generic S3 profiles. By default, the connection is established over HTTPS, so to use Cyberduck over HTTP you must install a special S3 profile. We are downloading the HTTP profile for this setup. We do not use Amazon as a storage provider; we just use Ceph's Object Gateway S3 API.
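As a rough illustration of the awscli route, the sketch below builds the concurrency setting and a bulk download as argument lists. The use of `aws s3 sync` is an assumption (the text above does not say which subcommand was used), and the bucket, directory, and profile names are hypothetical placeholders.

```python
import shlex


def configure_concurrency_cmd(profile: str, requests: int) -> list[str]:
    """Build the `aws configure set` call that raises S3 request concurrency."""
    return [
        "aws", "configure", "set",
        "s3.max_concurrent_requests", str(requests),
        "--profile", profile,
    ]


def sync_bucket_cmd(bucket: str, dest: str, profile: str) -> list[str]:
    """Build an `aws s3 sync` call that mirrors a bucket into a local directory."""
    return ["aws", "s3", "sync", f"s3://{bucket}", dest, "--profile", profile]


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    commands = (
        configure_concurrency_cmd("profile-name", 1000),
        sync_bucket_cmd("bucketname", "./download", "profile-name"),
    )
    for cmd in commands:
        # Printed rather than executed; subprocess.run(cmd, check=True) would run it.
        print(shlex.join(cmd))
```

Building the commands as lists keeps quoting out of the picture if they are later handed to `subprocess.run`.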

Specify your credentials: the DNS name of the S3 endpoint, the Access Key ID and, in the Password field, the secret access key of an object storage user.

Even with credentials in the environment, I still get prompted for the access key and secret. I wonder if that is needed? I use aws-vault to load the credentials into the environment. The current implementation requires checking the box "Read credentials from AWS CLI configuration". A nicer user experience would be to search for the credentials in a defined order. Is there a way to use this feature in the automation interface (`/script=script.txt`)? Thanks for sending me the development version.
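A credential search of the kind requested here might look like the following minimal sketch. The order shown (environment variables first, then the AWS CLI credentials file, then prompting) is an assumption modelled on the AWS SDK's default behaviour, not the order from the original request, and `resolve_credentials` is a hypothetical helper. Session tokens, which aws-vault also exports, are left out for brevity.

```python
import configparser
import os
from pathlib import Path
from typing import Mapping, Optional, Tuple


def resolve_credentials(
    env: Mapping[str, str] = os.environ,
    credentials_file: Path = Path.home() / ".aws" / "credentials",
    profile: str = "default",
) -> Optional[Tuple[str, str]]:
    """Return (access_key, secret_key) from the first source that has both."""
    # 1. Environment variables (where aws-vault places the credentials).
    access_key = env.get("AWS_ACCESS_KEY_ID")
    secret_key = env.get("AWS_SECRET_ACCESS_KEY")
    if access_key and secret_key:
        return access_key, secret_key

    # 2. The shared credentials file written by `aws configure`.
    parser = configparser.ConfigParser()
    parser.read(credentials_file)  # a missing file is silently skipped
    if parser.has_section(profile):
        section = parser[profile]
        access_key = section.get("aws_access_key_id")
        secret_key = section.get("aws_secret_access_key")
        if access_key and secret_key:
            return access_key, secret_key

    # 3. Nothing found: the caller would fall back to prompting the user.
    return None
```

With a chain like this, a prompt is only needed when `resolve_credentials()` returns `None`.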
